What is Docker Swarm?

Docker Swarm[1] is a utility that is used to create a cluster of Docker hosts that can be interacted with as if it were a single host. I was introduced to it a few days before it was announced at DockerCon EU[2], at the Docker Global Hack Day[3] that I participated in at work. During the introduction to the hack day, a few really cool new technologies were announced[4], including Docker Swarm, Docker Machine, and Docker Compose. Since Ansible fills the role of Machine and Compose, Swarm stuck out as particularly interesting to me.

Victor Vieux and Andrea Luzzardi announced the concept and demonstrated the basic workings of Swarm during the intros, and made a statement that I found very interesting. They said that though the POC (proof of concept) was functional and could be demoed, they were going to throw away all of that code and start from scratch. I thought that was great, and I try to keep it in mind when building a POC for a new technology.

The daemon is written in Go and, as of the latest commit at the time of writing (a0901ce8d6), is definitely alpha software. Things are moving at a very rapid pace, and the functionality and feature set vary almost daily. That being said, @vieux is extremely responsive with adding functionality and fixing bugs via GitHub Issues[5]. I would not recommend using it in production yet, but it is a very promising technology.

How does it work?

Interacting with and operating Swarm is (by-design) very similar to dealing with

a single Docker host. This allows interoperability with existing toolchains

without having to make too many modifications (the major ones being splitting

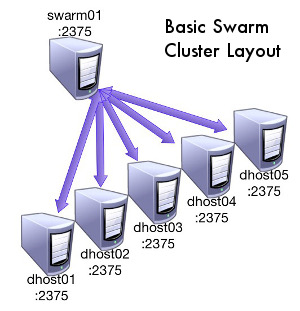

builds off of the Swarm cluster). Swarm is a daemon that is run on a Linux

machine bound to a network interface on the same port that a standalone Docker

instance (http/2375 or https/2376) would be. The Swarm daemon accepts connections

from the standard Docker client >=1.4.0 and proxies them back to the Docker

daemons configured behind Swarm which are also listening on the standard Docker

ports. It can distribute the create commands based on a few different packing

algorithms in combination with tags that the Docker daemons have been started

with. This makes the creation of a partitioned cluster of heterogeneous Docker

hosts that is exposed as a single Docker endpoint extremely simple.
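Because Swarm listens on the same port and speaks the same API as a standalone daemon, a client should only need a different host name to switch between the two. The following is a minimal sketch of that idea using only the Python standard library. The host names are hypothetical, and it assumes the endpoint answers the Docker Remote API's `GET /containers/json` call over plain HTTP on port 2375, which may not hold for every Swarm build at this stage.

```python
import json
import urllib.request


def api_url(host: str, port: int = 2375, path: str = "/containers/json") -> str:
    """Build a plain-HTTP Docker Remote API URL for a daemon or a Swarm endpoint."""
    return f"http://{host}:{port}{path}"


def list_containers(host: str, port: int = 2375, timeout: float = 5.0) -> list:
    """Return the containers reported by whatever is listening on host:port.

    The caller does not need to know whether the listener is a single
    Docker daemon or a Swarm daemon fronting several of them.
    """
    with urllib.request.urlopen(api_url(host, port), timeout=timeout) as resp:
        return json.load(resp)
```

Calling `list_containers("docker-host.example.com")` and `list_containers("swarm.example.com")` (both hypothetical names) would go through exactly the same code path, which is the interoperability point made above.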

Interacting with Swarm is ‘more or less’ the same as interacting with a single non-clustered Docker instance, but there are a few caveats. There is no one-to-one support for all Docker commands, for both architectural and time-based reasons: some commands are just not implemented yet, and I would imagine some might never be. Right now almost everything needed for running containers is available, including (amongst others):

- `docker run`
- `docker create`
- `docker inspect`
- `docker kill`
- `docker logs`
- `docker start`

This subset is the essential part of what is needed to begin playing with the tool at runtime. Here is an overview of how the technologies are used in the most basic configuration:

- The Docker hosts are brought up with `--label key=value`, listening on the network.
- The Swarm daemon is brought up and pointed at a file containing a list of the Docker hosts that make up the cluster, as well as the ports they are listening on.
- Swarm reaches out to each of the Docker hosts and determines their tags, health, and amount of resources in order to maintain a list of the backends and their metadata.
- The client interacts with Swarm via its network port (2375). You interact with Swarm the same way you would with Docker: create, destroy, run, attach, and get logs of running containers, amongst other things.
- When a command is issued to Swarm, Swarm:
  - decides where to route the command based on the provided `constraint` tags, the health of the backends, and the scheduling algorithm
  - executes the command against the proper Docker daemon
  - returns the result in the same format as Docker does
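The routing decision in the last step can be illustrated with a toy scheduler. This is only a sketch under stated assumptions, not Swarm's actual code: the `Node` class, the `schedule` function, the label names, and the "least free memory that still fits" packing rule are all hypothetical stand-ins for the filtering and packing described above.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """Hypothetical view of one backend Docker host as the scheduler sees it."""
    name: str
    labels: dict
    free_memory_mb: int
    healthy: bool = True


def schedule(nodes, constraints, memory_mb):
    """Pick a node: filter by health, capacity, and labels, then pack tightly."""
    candidates = [
        n for n in nodes
        if n.healthy
        and n.free_memory_mb >= memory_mb
        and all(n.labels.get(k) == v for k, v in constraints.items())
    ]
    if not candidates:
        raise LookupError("no healthy node satisfies the constraints")
    # Toy packing rule (an assumption): fill the fullest node that still fits.
    chosen = min(candidates, key=lambda n: n.free_memory_mb)
    chosen.free_memory_mb -= memory_mb
    return chosen


# Hypothetical cluster: labels mirror the --label key=value pairs on each host.
nodes = [
    Node("node-a", {"storage": "ssd"}, free_memory_mb=2048),
    Node("node-b", {"storage": "ssd"}, free_memory_mb=512),
    Node("node-c", {"storage": "disk"}, free_memory_mb=256),
    Node("node-d", {"storage": "ssd"}, free_memory_mb=4096, healthy=False),
]
```

With this data, a request for 256 MB constrained to `storage=ssd` skips `node-c` (wrong label) and `node-d` (unhealthy) and chooses between `node-a` and `node-b` on remaining memory alone.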

The Swarm daemon itself is only a scheduler and a router. It does not actually run the containers itself, meaning that if Swarm goes down, the containers it has provisioned are still up on the backend Docker hosts. In addition, since it doesn’t handle any of the network routing (network connections need to be routed directly to the backend Docker host), running containers will still be available even if the Swarm daemon dies. When Swarm recovers from such a crash, it is able to query the backends in order to rebuild its list of metadata.
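The recovery behaviour can be pictured as a scheduler that keeps no state of its own and asks the backends again after a restart. The sketch below is an illustration of that idea only. `rebuild_state`, `fake_query`, and the addresses are hypothetical, and a real implementation would call each daemon's API rather than read a dictionary.

```python
def rebuild_state(backends, query):
    """Rebuild the scheduler's view by asking every backend what it is running.

    `backends` is a list of host addresses; `query` is any callable that
    returns the container names running on one host (hypothetical helper).
    Unreachable hosts are recorded as unhealthy instead of aborting the rebuild.
    """
    state = {}
    for host in backends:
        try:
            state[host] = {"healthy": True, "containers": list(query(host))}
        except OSError:
            state[host] = {"healthy": False, "containers": []}
    return state


# Stand-in for real API calls: the containers keep running on the hosts
# regardless of whether the scheduler process is alive.
fake_cluster = {"10.0.0.1:2375": ["web-1", "web-2"], "10.0.0.2:2375": ["db-1"]}


def fake_query(host):
    """Return the containers on a known host, or fail like a dead connection."""
    if host not in fake_cluster:
        raise ConnectionError(f"cannot reach {host}")
    return fake_cluster[host]
```

Calling `rebuild_state(list_of_hosts, fake_query)` after a simulated restart yields the same picture the scheduler had before, because the source of truth is the backends rather than the scheduler.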

Due to the design of Swarm, interacting with it for all runtime activities is just about the same as with any other Docker daemon: the Docker client, docker-py, the docker-api gem, etc. all work. Build commands have not yet been figured out, but you can get by for runtime today. Unfortunately, at this exact time Ansible does not seem to work with Swarm in TLS mode[6], but the issue appears to affect the Docker daemon itself, not just Swarm.

This concludes the 1st post regarding Docker Swarm. I apologize for the lack of technical detail, but it will be coming in subsequent posts in the form of architectures, snippets, and some hands-on activities :) Look out for Part 2: Docker Swarm Configuration Options and Requirements coming soon!

All of the research behind these blog posts was made possible due to the awesome company I work for: Rally Software in Boulder, CO. We get at least 1 hack week per quarter and it enables us to hack on awesome things like Docker Swarm. If you would like to cut to the chase and directly start playing with a Vagrant example, here is the repo that is the output of my Q1 2014 hack week efforts:

1. https://github.com/docker/swarm
2. http://blog.docker.com/2015/01/dockercon-eu-introducing-docker-swarm/
3. http://www.meetup.com/Docker-meetups/events/148163592/
4. http://blog.docker.com/2014/12/announcing-docker-machine-swarm-and-compose-for-orchestrating-distributed-apps/
5. https://github.com/docker/swarm/issues
6. https://github.com/ansible/ansible/issues/10032